倫理 Rinri

A question of AI and Ethics.

It’s been a minute, but things with Disabling AI have been taking shape more formally since Tokyo. As I prep for formal research, Ethics has also been on my mind lately.

Image description by Claude: An AI-generated illustration of a smiling Black woman with robotic prosthetic limbs interacting with a glowing holographic panel in a futuristic urban plaza. The panel outlines an ethical AI governance framework alongside concepts for the future of disability, including bionic augmentation and neural interfaces. Signage in the background references an “Accessible City Hub” and “Global Innovation Symposium.”

As a graduate student researcher, I just finished up an extensive Ethics training full of ALL the questions we need to ask when working with human subjects. Even though my future research will primarily involve machines mimicking human subjects, it was still important to start with the basics and understand where consent and confidentiality come into play. And of course, there was a lot of identifying bias.

So much bias.

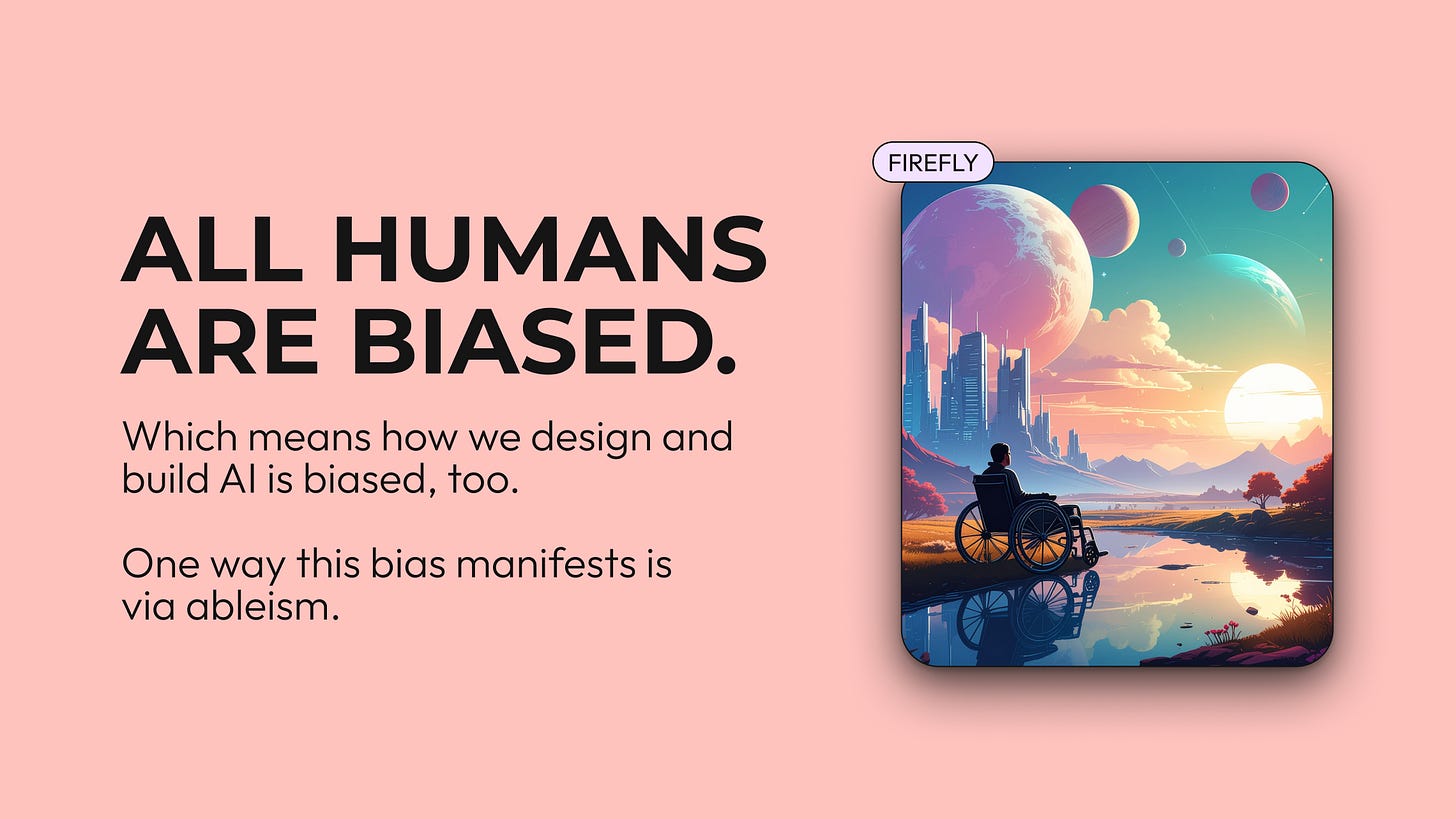

Image description by Claude: A presentation slide with a pink background. Large bold text on the left reads “All Humans Are Biased.” Smaller text below explains that AI design inherits human bias, and that one manifestation is ableism. To the right is a Firefly-generated illustration of a wheelchair user seen from behind, contemplating a glowing sci-fi cityscape reflected in water, surrounded by oversized planets and warm sunset colors.

Keeping up with.

This “Ethics Week” also made me deeply reflect on how AI makes it difficult to keep pace with both humans and machines. Ethically, that is.

For example, can you count the ethical decisions you make as a human on a daily basis? Take a minute and find those moments when you really have to reach inside and think:

What are we trying to achieve here? Am I bringing my bias into potential resolutions? Do I really feel that way? Where is that point of view coming from?

A few questions that may only come up a few times a day.

When it comes to working with AI, as I do daily, the number of times I have to ask ethical questions (or act more ethically) honestly goes up tenfold.

And that is alarming and warming at the same time.

Is this AI transparent enough? Who checked these outputs, and what bias might they have brought to their assessments? Is this a solution that works for everyone? How about for people with low vision? Or for screen-reader users? Do we know where this data is from? Who was involved in this decision? Was it a group of diverse disciplines and voices? How does a user know their information is really private? How could it not be private? What happens when…something happens?

So many ethical questions, all of them leading to more ethical questions.

倫理的な質問 (ethical questions).

I’m a word nerd at heart and read a lot. Inside the books I’m hanging out with currently (both the paper and digital ones) are margins full of question marks. Right now, I’ve got Inclusive Design for a Digital World next to Qualitative Research & Evaluation Methods, 4th Edition, and a pre-release of an AI Ethics in Action book (being a student has its perks).

Admittedly, the AI Ethics book is the fan fave right now, and it brings me back to high school, where I first met the concept of morality and folks like Aristotle and Kant. While I won’t divulge any of the pre-release goodness, being reminded of these OG ethicists did help me simplify Ethics a bit.

Ask questions, then ask them again. And when you have an answer, find another way to ask your question.

Then, maybe ask again.

Disabling AI in five questions.

While my next research will focus on text-based outputs involving LLMs and disability, my current adventures in GenAI representations of disability have led me down the path of, you guessed it, asking questions.

Five exactly.

Image description by Claude: A hand of five cream/beige-colored question cards fanned out on a dark wood surface. The cards appear to be part of a structured critique or audit tool, likely related to disability representation in media or imagery. One card reads “Is the image perpetuating a monolithic view of disability?” and has a small logo at the bottom that appears to be a stylized figure with glasses in orange and dark blue.

We’ll start fresh in the next post with Question 1, but in the meantime, I’ll ask:

What ethical questions are you battling every day? Are you asking those same questions of AI?

And if not, should you be?